Table of Contents

- What Are Depth of Field and Depth of Focus in Microscopy?

- Why Depth of Field Shrinks with Numerical Aperture and Magnification

- Depth of Focus at the Sensor: Eyepiece and Camera Tolerances

- How Wavelength and Refractive Index Influence Axial Sharpness

- Camera Pixel Size, Binning, and Sampling: Perceived Sharpness in Z

- Working Distance vs Depth of Field: Clearing Up a Common Confusion

- Trade-offs: Resolution, Contrast, Signal-to-Noise, and Depth

- Focusing Strategies and Z-Stack Planning Informed by DOF

- Common Misconceptions About Depth of Field in Microscopy

- Frequently Asked Questions

- Final Thoughts on Understanding Depth of Field and Depth of Focus

What Are Depth of Field and Depth of Focus in Microscopy?

In optical microscopy, two closely related—but distinct—concepts govern how much of a specimen appears sharp along the optical axis (the z-direction): depth of field and depth of focus. These terms are sometimes used interchangeably in casual conversation, but they describe different spaces and different tolerances. Understanding the difference will make your focusing decisions, imaging parameters, and expectations far more precise.

Artist: PaulT (Gunther Tschuch)

- Depth of field (DOF) refers to the axial range in object space—within the specimen—over which features are rendered acceptably sharp in the image. If you focus on a particular plane in a thick sample, the DOF describes how far above and below that plane objects can be while still looking “in focus.”

- Depth of focus refers to the axial range in image space—around the image plane near the sensor or eyepiece—over which the image remains acceptably sharp when the sensor or intermediate image plane is displaced. It is a tolerance of the imaging system that tells you how sensitive the image is to the exact position of the detector or projection plane.

Both quantities depend on the optics and the imaging criteria used to define “acceptably sharp.” In microscopy, these criteria are typically set by wave optics (diffraction) and by the detection or viewing system (camera pixel size, display magnification, or the observer’s eye resolution). Crucially, both DOF and depth of focus shrink as numerical aperture (NA) increases, and they are connected through the system’s magnification.

An intuitive rule of thumb: depth of field is about the sample; depth of focus is about the sensor. Increase NA to resolve finer details and you typically decrease the depth over which details look sharp.

To set up the discussion, we’ll use these physically grounded ideas that you can revisit throughout this article via the internal links:

- DOF in object space scales approximately as nλ / NA², where n is the refractive index in object space and λ is the imaging wavelength. See why NA dominates DOF.

- Depth of focus in image space is larger by a factor that depends on magnification and the chosen image sharpness criterion. See sensor-side tolerances.

- Sampling by the camera (pixel size and binning) sets what blur is “acceptable,” strongly shaping perceived DOF. See camera sampling.

- Wavelength and immersion medium change axial sharpness by changing diffraction and NA. See wavelength and refractive index.

With those anchors, let’s explore how each parameter contributes and how to balance them for the kind of image you want to produce.

Why Depth of Field Shrinks with Numerical Aperture and Magnification

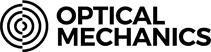

Depth of field is tightly connected to diffraction and the angular spread of light admitted by the objective lens, which is encoded by numerical aperture (NA). Numerical aperture, defined as NA = n sin(θ), where θ is the half-angle of the objective’s acceptance cone in the specimen medium of refractive index n, sets how tightly the objective can concentrate light and therefore the size of its point spread function (PSF). A higher NA lens accepts rays at larger angles and forms a smaller, sharper diffraction-limited focus.

Because axial blur arises when the focus is displaced relative to the specimen, the axial tolerance scales inversely with the square of that angular spread. The commonly used scaling relationship for widefield microscopy in object space is:

DOF ∝ (n · λ) / NA²
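
A rough derivation shows where the 1/NA² dependence comes from. The sketch below is an illustration under stated assumptions rather than the exact widefield result: it tracks the optical path difference ΔW between the axial ray and the marginal ray at the edge of the acceptance cone for a defocus Δz, applies a quarter-wave (Rayleigh-type) tolerance, and uses a small-angle approximation that becomes rough at high NA, which is one reason published constants differ:

```latex
\begin{aligned}
\Delta W &\approx n\,\Delta z\,(1-\cos\theta), \qquad \sin\theta = \frac{\mathrm{NA}}{n} \\
1-\cos\theta &\approx \frac{\theta^{2}}{2} \approx \frac{\mathrm{NA}^{2}}{2n^{2}}
  \;\Rightarrow\; \Delta W \approx \frac{\mathrm{NA}^{2}}{2n}\,\Delta z \\
\Delta W \le \frac{\lambda}{4} &\;\Rightarrow\; |\Delta z| \le \frac{n\,\lambda}{2\,\mathrm{NA}^{2}} \\
\mathrm{DOF} = 2\,|\Delta z|_{\max} &\approx \frac{n\,\lambda}{\mathrm{NA}^{2}}
\end{aligned}
```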

Artist: Anaqreon

Interpretation:

- Increase NA (wider cone of rays) → decrease DOF (stricter axial tolerance for sharpness).

- Increase wavelength λ → increase DOF (the focal spot is larger, but a given defocus produces a smaller phase error relative to λ, so more defocus is tolerated before the blur exceeds the acceptance criterion).

- Increase refractive index n on the object side → increase DOF for a fixed NA value; however, in practice, when you select an immersion medium with higher n you usually also select a higher-NA objective, and the NA² factor typically dominates the net effect on DOF.

So why does magnification come up so often in DOF discussions? In microscopy, objective magnification and NA are correlated in product lines: higher magnification objectives often have higher NA. That correlation can make it seem like magnification itself reduces DOF. Technically, for the diffraction-limited object-side DOF given above, magnification does not appear in the first-order scaling law; NA does. However, magnification enters when we define what “acceptably sharp” means in the image because magnification changes how defocus blur is sampled and displayed.

Specifically, the criterion for acceptable blur is frequently set by the smallest detail the camera or the eye can distinguish. A common approximate model adds a second term to DOF that depends on the imaging system’s acceptance of blur, which, in turn, depends on magnification M and a characteristic size of the detection element (e.g., pixel size or visual acuity):

DOF ≈ (n · λ) / NA² + (n · e) / (M · NA)

Here e represents a permissible blur diameter in image space (sometimes called the circle of confusion); for a camera it is usually tied to the pixel size. The first term is set by diffraction; the second reflects the detection or viewing criterion. You do not need the exact constants to understand the behavior: increasing M makes the second term smaller, tightening the acceptable axial range, because any object-side defocus blur is enlarged by M at the sensor and therefore exceeds the fixed criterion e sooner. For modern digital imaging, the camera’s sampling usually sets e and therefore this second term.
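
The two-term estimate can be turned into a small calculator. This is a minimal sketch, not a calibrated tool: it assumes e equals one camera pixel (6.5 µm here, an assumed value), dry objectives (n = 1), and 0.55 µm light; the function name `dof_terms_um` is hypothetical. Returning the two terms separately makes it easy to see which one dominates:

```python
def dof_terms_um(wavelength_um: float, n: float, na: float,
                 magnification: float, e_um: float) -> tuple[float, float]:
    """Return the (diffraction, detection) terms of the approximate DOF, in micrometres.

    diffraction = n * lambda / NA**2
    detection   = n * e / (M * NA), with e the permissible blur in image space
    """
    if not 0 < na <= n:
        raise ValueError("NA must be positive and cannot exceed n")
    diffraction = n * wavelength_um / na**2
    detection = n * e_um / (magnification * na)
    return diffraction, detection


PIXEL_UM = 6.5        # assumed camera pixel size, used as e
WAVELENGTH_UM = 0.55  # assumed green light

for label, mag, na in [("10x/0.25", 10, 0.25), ("40x/0.95", 40, 0.95)]:
    diff, det = dof_terms_um(WAVELENGTH_UM, 1.0, na, mag, PIXEL_UM)
    print(f"{label}: {diff:.2f} + {det:.2f} = {diff + det:.2f} um")
# 10x/0.25: 8.80 + 2.60 = 11.40 um
# 40x/0.95: 0.61 + 0.17 = 0.78 um
```

Under these assumptions the diffraction term dominates in both cases, and at 40×/0.95 it is roughly 3.5 times the detection term.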

In short:

- Objective NA is the primary optical driver of DOF in the specimen.

- Magnification influences perceived DOF by changing how blur is sampled and displayed, even when the optical DOF (diffraction term) is unchanged.

When you compare objectives, keep those roles distinct. If you want more of a thick specimen to look sharp at once, choose a lower NA (often, but not always, a lower magnification objective). If you want more axial sectioning and finer detail, choose a higher NA, expecting a shallower DOF and planning accordingly for focusing or z-stacking. For how these decisions interact with sensor tolerances, continue to depth of focus at the sensor.

Depth of Focus at the Sensor: Eyepiece and Camera Tolerances

Depth of focus is the axial tolerance around the image plane that still produces an acceptably sharp image. Move the camera slightly forward or backward relative to the nominal image plane; how much displacement can you allow before the image becomes noticeably blurred? That allowable range—the image-side axial tolerance—is the depth of focus.

Artist: Ernst Leitz (Firm)

In microscopy, depth of focus is related to the same optical ingredients: diffraction and an acceptability criterion for blur, now measured in image space. Two broad contributions are commonly distinguished:

- Diffraction-governed tolerance in image space scales with an effective f-number squared. In microscopy, magnification narrows the image-side cone of rays (the image-side NA is roughly NA/M when the image is formed in air), so the effective f-number grows with magnification for a given NA. Conventions for the constants differ, but the qualitative result is that the diffraction component of depth of focus grows with the square of the image-space f-number.

- Detection-governed tolerance depends on the acceptable image-space blur criterion (e.g., the smallest perceivable blur on the camera). Larger sensor pixels or less demanding viewing enlarge the acceptable tolerance, increasing depth of focus.

A widely taught approximate relationship ties object-side depth of field and image-side depth of focus by magnification: for the same acceptability criterion, depth of focus is roughly magnification-squared times depth of field (scaled by n′/n, the ratio of image-side to object-side refractive index, when the two media differ, as with an oil-immersion objective imaging into air). That is, image-side tolerances grow very quickly as magnification increases. The reason is longitudinal magnification: an axial displacement in the specimen maps to a displacement roughly M² times larger near the image plane, so a small object-side tolerance becomes a large image-side one. Consequently, in high-magnification systems even noticeable image-plane motion can stay within the depth of focus tolerance.
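
The M² rule can be sketched as a one-line conversion. This assumes paraxial optics, where the longitudinal magnification is M²·n′/n (n′ the image-side index, n the object-side index), so with the same medium on both sides it reduces to the M² rule above; the function name and the example DOF values are assumptions:

```python
def depth_of_focus_um(dof_um: float, magnification: float,
                      n_object: float = 1.0, n_image: float = 1.0) -> float:
    """Map an object-side DOF to an image-side depth of focus.

    Uses the paraxial longitudinal magnification M**2 * n_image / n_object;
    with equal media on both sides this reduces to the M**2 rule.
    """
    longitudinal_mag = magnification**2 * n_image / n_object
    return dof_um * longitudinal_mag


# Assumed dry 40x objective with an object-side DOF of about 0.78 um
print(f"{depth_of_focus_um(0.78, 40) / 1000:.2f} mm")  # 1.25 mm

# Assumed 100x/1.40 oil objective; diffraction-limited DOF = 1.515 * 0.55 / 1.40**2 um
dof_oil = 1.515 * 0.55 / 1.40**2
print(f"{depth_of_focus_um(dof_oil, 100, n_object=1.515) / 1000:.2f} mm")  # 2.81 mm
```

In the oil example the object-side index cancels against the n in the DOF numerator, leaving λM²/NA². Either way, the image-side tolerance is on the order of millimetres while the object-side one is below a micrometre.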

Practically, what does this mean for camera mounts, tube lenses, and adapters?

- Mechanical tolerances: Slight deviations in tube length or adapter spacing can be more forgiving at higher magnifications, because depth of focus in image space is larger. However, this does not relax focus accuracy at the specimen plane, where DOF is determined by NA and wavelength.

- Eyepiece projection vs direct camera coupling: When projecting through an eyepiece or relay optics, effective magnification at the sensor and the resulting acceptable blur size define the depth of focus. Careful alignment that minimizes tilt and maintains parfocality helps keep the system operating within its tolerances.

- Refocusing and z-step reproducibility: Although sensor-side tolerance can be large at high magnification, the object-side DOF is small, so fine (repeatable) z-positioning remains critical for consistent focus across a series. See z-stack planning for details.

For students and hobbyists, the main takeaway is to separate the two concepts: you can have a system with a generous image-side depth of focus while still having a very shallow object-side depth of field. The former relaxes how precisely you need to place the sensor; the latter determines how precisely you must position the specimen focus.

How Wavelength and Refractive Index Influence Axial Sharpness

Wavelength and refractive index shape diffraction and, therefore, axial sharpness. They appear together with NA in the scaling DOF ∝ (n · λ) / NA², but they also interact through how objectives are designed to achieve high NA.

Wavelength: longer light, larger tolerance

Longer wavelengths produce a wider diffraction pattern, reducing lateral resolution in proportion to λ. In axial terms, however, longer wavelengths also increase the defocus tolerance before the blur exceeds a given criterion, increasing DOF for the same NA. In practice, your choice of wavelength may be driven by sample properties, transmission, or fluorescence, but it is helpful to recognize this trend when evaluating axial sharpness across color channels.
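
To see the size of the effect across color channels, the diffraction term can be evaluated at a few wavelengths for one objective. The emission wavelengths and the 1.40 NA oil objective are assumed example values, and only the diffraction term is computed:

```python
def diffraction_dof_um(wavelength_um: float, n: float, na: float) -> float:
    """Diffraction term of the object-side DOF: n * lambda / NA**2 (micrometres)."""
    return n * wavelength_um / na**2


N_OIL, NA = 1.515, 1.40  # assumed oil-immersion objective
# Assumed emission peaks for four channels, in micrometres
channels_um = {"blue": 0.46, "green": 0.52, "red": 0.60, "far-red": 0.67}

for name, wl in channels_um.items():
    print(f"{name}: {diffraction_dof_um(wl, N_OIL, NA):.2f} um")
# blue: 0.36 um
# green: 0.40 um
# red: 0.46 um
# far-red: 0.52 um
```

The far-red value exceeds the blue one by exactly the wavelength ratio, 0.67/0.46 ≈ 1.46.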

Refractive index: immersion media and axial sectioning

The refractive index of the medium between the specimen and the front lens element—air, water, glycerol, or oil—affects both NA and the scaling of DOF. For a given physical lens geometry, higher-index immersion media support higher NA values. Very often, moving from air to an immersion medium increases NA sufficiently that the 1/NA² dependence dominates and reduces DOF substantially, even though the n in the numerator increases. This is not a contradiction; it reflects how immersion objectives achieve tighter focusing.
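
A quick comparison shows the NA² term outweighing the rise in n. The NA and index values below are assumed, typical-looking values for dry, water, and oil objectives, not specifications of any particular lens:

```python
def diffraction_dof_um(wavelength_um: float, n: float, na: float) -> float:
    """Diffraction term of the object-side DOF: n * lambda / NA**2 (micrometres)."""
    return n * wavelength_um / na**2


WAVELENGTH_UM = 0.55  # assumed green light
# (label, immersion refractive index n, NA): assumed example values
objectives = [("dry", 1.000, 0.95), ("water", 1.333, 1.20), ("oil", 1.515, 1.40)]

for label, n, na in objectives:
    print(f"{label} (n={n}, NA={na}): {diffraction_dof_um(WAVELENGTH_UM, n, na):.2f} um")
# dry (n=1.0, NA=0.95): 0.61 um
# water (n=1.333, NA=1.2): 0.51 um
# oil (n=1.515, NA=1.4): 0.43 um
```

From dry to oil, n grows by about 1.5× while NA² grows by about 2.2×, so the diffraction-limited DOF shrinks despite the larger numerator.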

Artist: Thebiologyprimer

Immersion also affects refractive index matching through the sample and coverslip. A refractive index mismatch between the immersion medium, the coverslip, and the specimen can introduce spherical aberration. Spherical aberration broadens the PSF, degrading contrast and effective resolution, and it can change the apparent DOF in ways that are not directly predicted by the ideal nλ/NA² scaling. When using immersion systems, pay attention to the objective’s specified coverslip thickness and consider objectives with correction collars that let you compensate for small deviations. For a deeper dive into how this connects to focusing strategy, see focusing and z-stacks.

Color channels and chromatic focus shifts

In multi-wavelength imaging, objectives and tube lenses are corrected to bring different colors to a common focus, but small residual axial (longitudinal) chromatic shifts can remain. This means the best-focus plane can vary slightly with wavelength. The effect is usually small in well-corrected systems but is worth remembering if you notice that different color channels require slightly different z-positions to appear maximally sharp. Such color-dependent focus shifts can mimic DOF changes across channels.

Camera Pixel Size, Binning, and Sampling: Perceived Sharpness in Z

Even when optical DOF is set by NA and wavelength, the perceived DOF—the range of z positions that look sharp to you on screen—also depends on how the camera and display sample the image. Two images with the same optical DOF can look very different if one is undersampled and the other is well-sampled.

From pixel pitch to object-space sampling

In a microscope camera, the pixel pitch in sensor space maps to an effective sampling interval in object space according to total magnification. If p_cam is the camera’s pixel size and M_total is the total system magnification at the sensor, then the effective pixel size in object space is approximately:

p_obj ≈ p_cam / M_total

This relationship tells you how finely the camera samples details at the specimen. To faithfully represent features at the diffraction limit, the sampling should meet a Nyquist-like condition: the object-space pixel size should be no more than about half the smallest resolvable distance. In practice, microscopy often aims for roughly two to three pixels across the diffraction-limited resolution distance to avoid aliasing and preserve contrast.
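
The mapping p_obj ≈ p_cam / M_total and a Nyquist-like check can be sketched as follows. This assumes the Rayleigh distance 0.61λ/NA as the characteristic detail size and "at least two pixels across it" as the threshold; the 6.5 µm pixel, 0.52 µm emission, and the objective list are assumed example values, and the helper names are hypothetical:

```python
def object_pixel_um(p_cam_um: float, m_total: float) -> float:
    """Effective pixel size at the specimen: p_obj ~ p_cam / M_total."""
    return p_cam_um / m_total


def rayleigh_distance_um(wavelength_um: float, na: float) -> float:
    """Lateral Rayleigh resolution 0.61 * lambda / NA, assumed as the detail size."""
    return 0.61 * wavelength_um / na


def meets_nyquist(p_cam_um: float, m_total: float,
                  wavelength_um: float, na: float) -> bool:
    """True if at least two object-space pixels fit across the Rayleigh distance."""
    return object_pixel_um(p_cam_um, m_total) <= rayleigh_distance_um(wavelength_um, na) / 2


# Assumed 6.5 um camera pixels, 0.52 um emission, no extra relay magnification
for m_total, na in [(40, 0.95), (40, 1.30), (60, 1.40)]:
    p = object_pixel_um(6.5, m_total)
    ok = meets_nyquist(6.5, m_total, 0.52, na)
    print(f"{m_total}x/{na:.2f}: object pixel {p:.4f} um, Nyquist met: {ok}")
# Nyquist met: True, False, True for the three cases
```

In this sketch the same 40× magnification that is adequate at NA 0.95 falls short at NA 1.30, because the finer diffraction-limited detail needs smaller object-space pixels.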

Sampling and the acceptable blur criterion

The acceptable blur diameter used in DOF expressions is effectively set by the detection system. A tightly sampled camera (small p_cam, large M_total) imposes a stricter criterion—much smaller blurs become visible—so the perceived DOF gets smaller, even when the optical DOF is unchanged. Conversely, if the sampling is coarse (large pixels, small total magnification), small focus errors may not be visible on screen, and perceived DOF appears larger.

Two implications follow:

- Changing the camera or projection without changing the objective can make the DOF appear to change. You are changing the detection criterion, not the underlying optics. This is one reason the second term in the DOF expression involves M and a detector-size parameter.

- Digital zoom changes only the display scale. It does not improve optical sampling or change optical DOF; it simply magnifies the sampled pixel grid, which can make blur more or less apparent on screen and so shift perceived DOF.

Artist: Spencer Bliven

Binning and downsampling

Binning (hardware or software) combines adjacent pixels, increasing the effective p_cam. This reduces noise per effective pixel at the cost of sampling resolution, and it generally increases perceived DOF because the accepted blur diameter becomes larger. If your application tolerates lower spatial sampling and prioritizes signal-to-noise ratio, binning can make focusing less twitchy, at the expense of fine detail.
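
Binning’s effect on the detection term can be sketched by treating the binned pixel as the permissible blur e. This is an assumption (some treatments take e as a multiple of the pixel size), and the 40×/0.95 dry objective and 6.5 µm pixels are example values; `detection_term_um` is a hypothetical helper:

```python
def detection_term_um(n: float, pixel_um: float, binning: int,
                      magnification: float, na: float) -> float:
    """DOF detection term n * e / (M * NA), taking e as the binned pixel size."""
    e_um = pixel_um * binning  # b x b binning makes each effective pixel b times wider
    return n * e_um / (magnification * na)


# Assumed 40x/0.95 dry objective and 6.5 um camera pixels
for b in (1, 2, 4):
    term = detection_term_um(1.0, 6.5, b, 40, 0.95)
    print(f"{b}x{b} binning: detection term {term:.2f} um")
# detection term: 0.17, 0.34, 0.68 um
```

The detection term grows linearly with the binning factor while the diffraction term n·λ/NA² is unaffected, so perceived DOF widens somewhat as the object-space pixel grows.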

Aliasing, sharpening, and focus peaking

Undersampling can cause aliasing, which may mask or misrepresent fine patterns even when the focus is optimal. Likewise, software sharpening and focus peaking can make images seem crisper or help guide focusing, but they do not alter the underlying DOF. They enhance or reweight spatial frequencies in the sampled image, modifying appearance rather than underlying optics. When evaluating DOF or comparing imaging setups, disable aggressive post-processing and compare raw or minimally processed images for a fair assessment.

To see how sampling connects back to axial decisions like z-steps, continue to focusing strategies and z-stacking.

Working Distance vs Depth of Field: Clearing Up a Common Confusion

Working distance is the physical clearance between the front of the objective and the specimen when the specimen is in focus. It is a mechanical property of the lens design and does not, by itself, determine the optical depth of field. However, there is a practical association: lenses with long working distances are often lower NA lenses (to achieve such clearance and field coverage), and lower NA tends to increase DOF. That indirect correlation sometimes leads to confusion.

Key distinctions:

- Working distance: Mechanical clearance, helpful for maneuvering thick or irregular specimens and for adding tools like micropipettes or manipulators.

- Depth of field: Optical axial tolerance for sharpness in the specimen, set mainly by NA and wavelength.

- Field curvature and tilt: Optical field curvature and specimen tilt can make different parts of the field come to focus at different z positions. A flat-field (plan) objective can help with curvature, but both issues are distinct from DOF.

When choosing an objective for a bulky specimen, you might pick a long-working-distance lens. Because such designs typically have lower NA, expect a comparatively generous DOF. But remember: you are trading axial sectioning and lateral resolution for access and tolerance.

Trade-offs: Resolution, Contrast, Signal-to-Noise, and Depth

Optical microscopy involves balancing limited physical resources: photon budgets, angular acceptance (NA), and sensor sampling. These balances surface clearly when you weigh depth of field against resolution and contrast.

Resolution vs depth: the NA lever

A higher NA improves lateral resolution (smaller diffraction-limited spot) but reduces DOF because defocus hurts a tightly focused spot more quickly. If your subject requires both fine detail and extended depth, two broad approaches are common:

- Optical compromise: Pick an intermediate NA that keeps axial tolerance manageable while still capturing fine-enough details for your question.

- Computational combination: Acquire multiple z-planes (a z-stack) with a high-NA objective and merge them into a single image using focus stacking or 3D reconstruction, so that you retain high-resolution data across depth. Details on step spacing appear in z-stack planning.

Contrast and signal-to-noise ratio (SNR)

DOF considerations are not isolated from contrast and SNR. When the focus plane slices through a semi-transparent specimen, out-of-focus light from above and below can reduce contrast in the in-focus structures. As NA increases, the objective collects higher-angle rays that can increase contrast for fine detail in the focal plane. The narrower DOF also means out-of-focus structures are blurred more strongly: that light spreads into a more diffuse background, which still lowers contrast unless the system rejects out-of-focus light well. On the detection side, undersampling can suppress the visibility of faint high-frequency details, altering perceived DOF and contrast simultaneously.

For reflective or opaque samples in reflected-light microscopy, the same principles apply: a higher NA objective in reflection mode offers improved lateral detail at the cost of shallower DOF. Surface roughness and relief can make focusing sensitive; sufficient sampling and careful z-stepping can mitigate lost features.

Illumination intensity and exposure

Although illumination geometry is not the subject here, note that exposure choices (integration time and gain) shape noise and thus the practical visibility of faint details near the DOF boundaries. Longer exposure improves SNR until sample stability or the camera’s full-well capacity becomes the limit. Noise reduction can make near-focus planes appear less noisy, which some observers interpret as an apparent change in DOF. It is not a change in optical DOF; it is a change in visibility at the blur threshold.

Specimen refractive structure

Different specimens scatter and absorb light differently across depth. Highly scattering regions can wash out in-focus contrast from adjacent planes, superficially narrowing the effective DOF for practical purposes. Clear, low-scattering specimens may appear to have a more forgiving DOF because out-of-focus contributions are less intrusive relative to the in-focus signal. These are contrast and SNR effects superimposed on the optical DOF.

Focusing Strategies and Z-Stack Planning Informed by DOF

Understanding DOF helps you make deliberate choices about focusing and z-stacking without relying on guesswork. While acquisition protocols vary widely by application, the physical relationships discussed so far motivate several general strategies.

Choose a focus anchor and define “acceptably sharp”

Before you assess DOF, decide what feature in your specimen defines acceptable sharpness. Is it the visibility of small textures, the separation of nearby edges, or the crispness of a boundary? This choice sets a blur criterion, which, combined with NA and wavelength, yields your operational DOF. Two practical tips follow from this:

- Use the most critical detail as your focus anchor. The DOF that preserves this detail is usually shallower than what suffices for larger structures.

- Match sampling to the detail. If your anchor feature lies near the diffraction limit, ensure your camera sampling meets a Nyquist-like condition as explained in camera sampling.

Interpreting axial resolution and DOF together

Axial resolution and DOF are closely related. A common scaling for the axial resolution in widefield imaging is proportional to (n · λ) / NA², which mirrors the DOF scaling. This does not mean they are identical; axial resolution often refers to the system’s ability to distinguish two nearby planes as separate peaks in axial intensity, whereas DOF refers to the range of z positions over which a single plane is rendered acceptably sharp according to a chosen criterion. In practice, the two are of similar order for a given NA and wavelength, so planning z-steps on the order of that characteristic axial scale tends to capture enough information for stacking or 3D reconstruction.

Artist: QuodScripsiScripsi

Planning z-step spacing

When collecting a z-stack for focus fusion or deconvolution, the step size should be small enough that you do not “leap over” important axial information. A practical way to think about it:

- Step size smaller than the characteristic axial blur width keeps successive planes overlapping in content, which improves the fidelity of merging algorithms.

- Trade-off between step count, time, and light dose: Smaller steps capture more planes, increasing acquisition time, data volume, and total exposure of the specimen. Ensure that exposure, sample stability, and processing capabilities can support the plan.

- Sampling symmetry: Collect planes above and below the focus anchor over at least the axial extent of interest so that the final reconstruction does not bias toward a single side of focus.
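
These rules of thumb can be turned into a small planner. In this minimal sketch the axial scale is taken as n·λ/NA², the target step is half of it (an assumed Nyquist-like choice, adjustable via `samples_per_scale`), and the step is shrunk slightly so that planes land exactly on both ends of the requested range; `plan_z_stack` is a hypothetical helper, not part of any acquisition software:

```python
import math


def plan_z_stack(anchor_um: float, below_um: float, above_um: float,
                 wavelength_um: float, n: float, na: float,
                 samples_per_scale: int = 2) -> tuple[list[float], float]:
    """Evenly spaced z positions from anchor - below to anchor + above.

    The target step is the axial scale n * lambda / NA**2 divided by
    samples_per_scale; it is then reduced slightly so both ends of the
    range are sampled exactly.
    """
    total = below_um + above_um
    if total <= 0:
        return [anchor_um], 0.0
    axial_scale = n * wavelength_um / na**2
    target_step = axial_scale / samples_per_scale
    n_steps = math.ceil(total / target_step)
    step = total / n_steps
    start = anchor_um - below_um
    return [start + i * step for i in range(n_steps + 1)], step


# Assumed: +/-5 um around an anchor at z = 100 um, 0.95 NA dry objective, 0.55 um light
z, step = plan_z_stack(100.0, 5.0, 5.0, wavelength_um=0.55, n=1.0, na=0.95)
print(len(z), f"{step:.3f}")  # 34 0.303
```

Under these assumptions the stack needs 34 planes about 0.30 µm apart; passing unequal `below_um` and `above_um` values handles asymmetric ranges.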

These points stem directly from DOF and axial resolution physics without prescribing a one-size-fits-all protocol. If your purpose is 2D focus stacking for extended depth in a static specimen, prioritize covering the axial range where features of interest exist, using a z-step that captures the changing sharpness of the smallest important features. If your aim is quantitative 3D imaging, align step sizes with the optical section thickness implied by your NA and wavelength, and ensure lateral sampling meets Nyquist-like criteria as well.

Focus stability and hysteresis

Mechanical focus drives can exhibit backlash or hysteresis. While this is not an optical phenomenon, it interacts with DOF: a shallow DOF leaves little room for mechanical inconsistencies. When reproducing focus positions across a series, approach the setpoint from the same direction each time, check for drift, and verify that the apparent sharpness of your anchor feature remains consistent across repeated passes. These steps help you stay within the object-side DOF that your optics allow.
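
The "approach from the same direction" habit can be expressed as a waypoint rule. This is a hypothetical helper that only computes positions; sending them to a real focus drive depends on your stage’s own control software, and the 5 µm overshoot is an assumed value that should exceed the drive’s backlash:

```python
def focus_waypoints(current_um: float, target_um: float,
                    overshoot_um: float = 5.0) -> list[float]:
    """Stage positions whose final move toward target is always in +z.

    If the target lies below the current position, first overshoot below it
    by overshoot_um (assumed larger than the drive's backlash), then move up
    to the target, so every setpoint is reached from the same side.
    """
    if target_um >= current_um:
        return [target_um]
    return [target_um - overshoot_um, target_um]


print(focus_waypoints(120.0, 100.0))  # [95.0, 100.0]
print(focus_waypoints(90.0, 100.0))   # [100.0]
```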

Specimen considerations

Thicker specimens can contain structures at many depths within a field of view. A shallow DOF can be beneficial if your goal is to isolate a single plane. If instead you want a comprehensive view, DOF will limit how much appears sharp in a single exposure. In that case, plan a stack that samples through the volumes containing important features or select a lower-NA objective to enlarge DOF at the cost of fine axial sectioning. The correct choice depends on whether the application prizes isolation of slices or accumulation of depth.

Common Misconceptions About Depth of Field in Microscopy

Several misconceptions circulate around DOF and can lead to confusing results. Clarifying them will help you diagnose what you see at the eyepieces or on the screen.

- “Magnification alone controls DOF.” In microscopy, it is NA that primarily controls object-side DOF. Magnification contributes to perceived DOF via sampling and display scale, but the dominant optical term is set by NA and wavelength.

- “Long working distance means large DOF.” Long working distance is a mechanical property; many long-working-distance objectives also have lower NA, which increases DOF. The correlation is common but indirect. See working distance vs DOF.

- “Sharper at the edges means different DOF.” Edge sharpness often indicates field curvature or aberrations, not a change in DOF. A truly uniform DOF assumes uniform optical performance across the field.

- “Post-processing changes DOF.” Deconvolution and sharpening can improve contrast and restore some lost detail, but they do not change the underlying optical DOF captured at acquisition. They can, however, change how blur is perceived.

- “Changing color has no effect on DOF.” Wavelength affects diffraction, thus DOF, and chromatic focus shifts can move the best-focus plane slightly with color. See wavelength effects.

Frequently Asked Questions

Is depth of field always worse at higher magnification?

Not strictly because of magnification itself. Object-side DOF scales primarily with NA and wavelength. Many higher-magnification objectives also have higher NA, which reduces DOF; this pairing creates the common experience that higher magnification yields shallower DOF. If two objectives had the same NA but different magnifications, their diffraction-limited DOF in object space would be the same, but perceived DOF would still differ because of sampling and display scale. For more context on the dominant role of NA, see the NA section.
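
The same-NA comparison can be made concrete with the two-term DOF estimate. The objectives below are hypothetical (20× and 50×, both NA 0.50, dry), with 6.5 µm pixels taken as e and 0.55 µm light, all assumed values:

```python
def dof_terms_um(wavelength_um: float, n: float, na: float,
                 magnification: float, e_um: float) -> tuple[float, float]:
    """(diffraction, detection) terms n*lambda/NA**2 and n*e/(M*NA), in micrometres."""
    return n * wavelength_um / na**2, n * e_um / (magnification * na)


# Two hypothetical dry objectives sharing NA 0.50; 6.5 um pixels; 0.55 um light
for mag in (20, 50):
    diff, det = dof_terms_um(0.55, 1.0, 0.50, mag, 6.5)
    print(f"{mag}x/0.50: diffraction {diff:.2f} um, detection {det:.2f} um")
# 20x/0.50: diffraction 2.20 um, detection 0.65 um
# 50x/0.50: diffraction 2.20 um, detection 0.26 um
```

The diffraction term is identical; only the detection term changes with magnification.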

Why does a stereo microscope seem to have a large DOF compared to a compound microscope?

Stereo microscopes generally operate at low NA to provide wide fields of view and long working distances. Low NA increases DOF according to the nλ/NA² scaling. Compound microscopes, used for high-resolution imaging of thin sections or small features, use higher NA to resolve fine detail, which reduces DOF. The difference you notice arises from these NA choices, not from an intrinsic property of the instrument class. For a discussion of how working distance relates indirectly to DOF, see working distance vs DOF.

Final Thoughts on Understanding Depth of Field and Depth of Focus

Depth of field and depth of focus are two sides of how microscopes form sharp images along the optical axis. Object-side DOF tells you over what specimen depth your features remain acceptably sharp; image-side depth of focus tells you how tightly the image plane must be held to keep the image sharp at the sensor or eyepiece. They are governed by diffraction, numerical aperture, wavelength, and by how the detection system defines and samples permissible blur.

Three practical takeaways align with the core physics outlined above:

- NA is your primary control for DOF in the specimen: higher NA gives finer detail but reduces DOF; lower NA increases DOF but sacrifices axial sectioning and lateral resolution.

- Sampling defines perceived sharpness: pixel size, binning, and total magnification at the camera determine how much blur you actually see, changing perceived DOF even when optical DOF is constant.

- Plan focus and z-steps deliberately: choose a focus anchor, match sampling to your smallest important features, and space z-steps so successive planes overlap sufficiently to capture axial information without unnecessary redundancy.

Use these principles to select objectives, set up cameras, and plan image acquisition purposefully. If you need extended depth and fine detail, consider a high-NA stack with appropriate z-sampling and computational fusion. If you need generous DOF for overview work or for tall specimens, select a lower NA objective and ensure camera sampling is matched to the level of detail you want to record.

For more deep dives into optical fundamentals, such as how lateral resolution limits relate to sampling or how refractive index mismatches introduce spherical aberration, explore our related articles.