Table of Contents

- What Do Numerical Aperture, Resolution, and Magnification Mean in Light Microscopy?

- How Resolution Arises: Diffraction, Wavelength, and the Abbe Limit

- Numerical Aperture Deep Dive: Collection Angle, Immersion Media, and Refractive Index

- Magnification vs. Resolving Power: Avoiding Empty Magnification

- Contrast and Illumination: Köhler, Brightfield, Darkfield, Phase, and DIC Basics

- Sampling and Cameras: Pixel Size, Nyquist, and Digital Resolution

- Objective Lens Trade-offs: Working Distance, Field Number, Aberrations, and Cover Glass

- Calibrating Magnification and Measuring Features Correctly

- Common Microscopy Misconceptions and How to Fix Them

- Frequently Asked Questions

- Final Thoughts on Choosing the Right Optical Parameters for Microscopy

What Do Numerical Aperture, Resolution, and Magnification Mean in Light Microscopy?

Three terms dominate nearly every discussion of optical microscopy: numerical aperture (NA), resolution, and magnification. They interact in predictable ways grounded in physical optics, and understanding their relationships is the key to selecting objectives, configuring illumination, and interpreting the images you see or record. This section defines each term precisely and sets the stage for deeper dives into diffraction-limited resolution, the role of NA and immersion media, and why more magnification is not always better.


Numerical aperture (NA) is a measure of the light-gathering ability and angular acceptance of an optical system, typically an objective lens or condenser. By definition, for an objective focused into a medium of refractive index n, NA = n · sin(θ), where θ is the half-angle of the maximal cone of light captured by the lens from the specimen. A higher NA means the objective collects light over a wider angle, improving its ability to resolve fine details and transmit more signal from high spatial frequencies.

Resolution is the smallest separation between two features at which they can be distinguished as separate. In incoherent, diffraction-limited brightfield imaging, lateral resolution is fundamentally limited by diffraction and depends on wavelength and NA. Two commonly cited criteria are:

- Abbe limit: the minimum resolvable period of a line grating is approximately d ≈ λ / (2 · NA).

- Rayleigh criterion: the distance between the centers of two point sources that can be just resolved is approximately d ≈ 0.61 · λ / NA.

These expressions differ only by a constant factor, and both express the same key dependence: shorter wavelengths and higher NA yield finer resolution. Axial (depth) resolution behaves differently. Its scale is larger than the lateral resolution and, in widefield imaging, is often approximated by δz ≈ 2 · n · λ / NA². This again shows that higher NA substantially improves resolution along the z-direction.
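The two lateral criteria can be compared numerically. The sketch below is a minimal illustration, not a measurement tool. It uses only the formulas quoted above. The function names and the example NA values are hypothetical choices for illustration.

```python
def abbe_limit_nm(wavelength_nm: float, na: float) -> float:
    """Minimum resolvable grating period, d ≈ λ / (2 · NA)."""
    return wavelength_nm / (2.0 * na)


def rayleigh_limit_nm(wavelength_nm: float, na: float) -> float:
    """Just-resolvable separation of two point sources, d ≈ 0.61 · λ / NA."""
    return 0.61 * wavelength_nm / na


if __name__ == "__main__":
    # Illustrative values only: green light and a few typical objective NAs.
    wavelength = 550.0  # nm
    for na in (0.25, 0.65, 0.95, 1.4):
        print(f"NA {na:4.2f}: Abbe {abbe_limit_nm(wavelength, na):6.1f} nm, "
              f"Rayleigh {rayleigh_limit_nm(wavelength, na):6.1f} nm")
```

For any NA and wavelength, the ratio between the two estimates is the constant 0.61 / 0.5 = 1.22. Either one works as a quick estimate.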

Magnification specifies how large the image appears relative to the object. In an optical microscope, magnification is usually the product of objective magnification and the magnification of the intermediate optics (eyepiece or camera relay). But magnification by itself does not create new detail; it merely scales the image. The important distinction is that resolution defines the finest detail that can be formed by the optics, while magnification determines how big that resolvable detail appears to your eye or sensor. This is the crux of avoiding empty magnification.

Because clarity often hinges on the relationship among these three concepts, we will build from first principles—diffraction and wave optics—to show why NA is the lever that sets ultimate resolving power, how the illumination system and contrast methods influence what you can see, and how to sample an image digitally without leaving resolution on the table.

How Resolution Arises: Diffraction, Wavelength, and the Abbe Limit

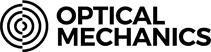

Even a perfect, aberration-free lens does not form a perfect point image of a point object. Due to the wave nature of light, the image of a point source is a diffraction pattern with a bright central spot (the Airy disk) surrounded by rings. The diameter of that central spot, and the spacing between adjacent diffraction patterns from neighboring points, set a hard limit on how close two details can be before they blur into one.

Artist: Anaqreon

To quantify this, we rely on spatial frequency arguments and classical results from Abbe and Rayleigh. Consider incoherent imaging, such as fluorescence. Brightfield with the condenser aperture matched to the objective NA reaches the same cutoff. In this case, the optical transfer function has a cutoff spatial frequency in object space of approximately f_c = 2 · NA / λ (cycles per unit length). Structures with spatial frequencies higher than f_c cannot pass through the imaging system, because the objective does not collect the diffracted orders required to reconstruct them. Abbe’s analysis of periodic structures therefore yields a minimum resolvable period d = 1 / f_c ≈ λ / (2 · NA).
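The cutoff f_c is not a cliff: contrast falls off gradually as spatial frequency approaches it. The sketch below is a rough illustration of that fall-off. It uses the standard textbook modulation transfer function (MTF) of an aberration-free circular pupil under incoherent imaging. It assumes ideal optics, with no aberrations, defocus, or detector effects. The NA and wavelength in the example are arbitrary.

```python
import math


def cutoff_frequency_per_um(wavelength_um: float, na: float) -> float:
    """Incoherent cutoff f_c = 2 · NA / λ, in cycles per micrometer."""
    return 2.0 * na / wavelength_um


def diffraction_limited_mtf(f: float, f_c: float) -> float:
    """MTF of an ideal circular pupil, incoherent imaging.

    With normalized frequency s = f / f_c:
    MTF(s) = (2/π) · (arccos(s) − s · sqrt(1 − s²)) for 0 ≤ s < 1, else 0.
    """
    s = abs(f) / f_c
    if s >= 1.0:
        return 0.0
    return (2.0 / math.pi) * (math.acos(s) - s * math.sqrt(1.0 - s * s))


if __name__ == "__main__":
    # Illustrative values: a hypothetical NA 1.4 objective at 520 nm.
    f_c = cutoff_frequency_per_um(0.52, 1.4)
    print(f"cutoff {f_c:.2f} cycles/µm (finest period {1000 / f_c:.0f} nm)")
    for frac in (0.1, 0.25, 0.5, 0.75, 0.9):
        f = frac * f_c
        print(f"period {1000 / f:6.0f} nm: relative contrast "
              f"{diffraction_limited_mtf(f, f_c):.2f}")
```

Periods close to λ / (2 · NA) pass through the optics with very little contrast. This is one reason detail near the limit can be technically resolved yet hard to see.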

Rayleigh considered the overlap of two Airy patterns and proposed that two point sources are just resolvable when the first minimum of one pattern coincides with the maximum of the other. This criterion gives a slightly different constant: d ≈ 0.61 · λ / NA. Although derived differently, both expressions encode the same physics. Practically, either form can be used as a quick estimate of lateral resolution when you know NA and the dominant wavelength in your imaging modality.
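The 0.61 constant comes straight from the Airy pattern. The normalized intensity is (2 · J1(v) / v)², where v = 2π · NA · r / λ. The first dark ring sits at the first zero of the Bessel function J1, near v ≈ 3.83, so the Rayleigh constant is that zero divided by 2π. The standard-library sketch below recovers the constant numerically. It evaluates J1 from Bessel's integral, where in practice one would normally call `scipy.special.j1`. It also reports the dip between two equal, incoherent point images at the Rayleigh separation. The helper names are illustrative.

```python
import math


def bessel_j1(x: float, steps: int = 2000) -> float:
    """J1(x) via Bessel's integral (1/π) ∫_0^π cos(τ − x·sin τ) dτ,
    evaluated with composite Simpson's rule (steps must be even)."""
    h = math.pi / steps
    total = 0.0
    for k in range(steps + 1):
        tau = k * h
        weight = 1 if k in (0, steps) else (4 if k % 2 else 2)
        total += weight * math.cos(tau - x * math.sin(tau))
    return total * h / 3.0 / math.pi


def airy_intensity(v: float) -> float:
    """Normalized Airy intensity (2·J1(v)/v)², with v = 2π · NA · r / λ."""
    if abs(v) < 1e-12:
        return 1.0
    return (2.0 * bessel_j1(v) / v) ** 2


def first_airy_zero(lo: float = 3.0, hi: float = 4.5, tol: float = 1e-10) -> float:
    """Bisection for the first zero of J1 (first dark ring), bracketed in [3, 4.5]."""
    f_lo = bessel_j1(lo)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        f_mid = bessel_j1(mid)
        if (f_mid > 0) == (f_lo > 0):
            lo, f_lo = mid, f_mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    v0 = first_airy_zero()
    # r = v0 / (2π) · λ / NA, so the Rayleigh constant is v0 / (2π).
    print(f"Rayleigh constant: {v0 / (2 * math.pi):.4f}")
    # Incoherent point images add in intensity; compare the midpoint with a peak.
    midpoint = 2 * airy_intensity(v0 / 2)
    peak = airy_intensity(0.0) + airy_intensity(v0)
    print(f"Midpoint/peak intensity at Rayleigh separation: {midpoint / peak:.3f}")
```

The midpoint intensity comes out at roughly three quarters of the peak. That modest dip is what "just resolvable" means under Rayleigh's criterion.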

[Figure: this image uses a nonlinear (fourth-root) color scale to better show the minima and maxima.]

Wavelength dependence. Blue light (~450 nm) yields better diffraction-limited resolution than green (~550 nm) or red (~650 nm) light because the minimum resolvable distance d scales with λ. This is why fluorescence imaging of blue-emitting dyes can, in principle, resolve smaller details than red-emitting dyes when using the same objective and NA. However, illumination, detection sensitivity, and specimen properties often constrain the feasible wavelength range, so the theoretical advantage is not always realized.
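The linear wavelength scaling can be made concrete for a single objective. The sketch below assumes a hypothetical NA 1.4 objective. It uses the three representative wavelengths named above and the Rayleigh estimate.

```python
def rayleigh_nm(wavelength_nm: float, na: float) -> float:
    """Rayleigh estimate of lateral resolution, d ≈ 0.61 · λ / NA."""
    return 0.61 * wavelength_nm / na


# Same hypothetical objective for every band; only the wavelength changes.
NA = 1.4
BANDS = {"blue": 450.0, "green": 550.0, "red": 650.0}

if __name__ == "__main__":
    for name, wl in BANDS.items():
        print(f"{name:5s} {wl:.0f} nm -> d ≈ {rayleigh_nm(wl, NA):.0f} nm")
```

Moving from 650 nm to 450 nm shrinks d by the same factor as the wavelength, about 31%. In practice, dye brightness and detector sensitivity often matter just as much.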

Axial resolution is governed by the spread of the point-spread function (PSF) along the optical axis. In widefield microscopy, a commonly used approximation for axial resolution is δz ≈ 2 · n · λ / NA², where n is the refractive index of the immersion medium at the sample. Two trends are important:

- Axial resolution improves strongly as NA increases, scaling with 1 / NA².

- For a given NA, imaging in a higher refractive index medium yields a thicker PSF axially, reflected in the factor n in the numerator. However, immersion media also influence the achievable NA; see Numerical Aperture Deep Dive for context.
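Both trends follow directly from δz ≈ 2 · n · λ / NA². The sketch below applies only that widefield approximation, not a full PSF model. It adds a guard that rejects NA values larger than n. The function name is illustrative.

```python
def axial_resolution_nm(wavelength_nm: float, na: float, n: float) -> float:
    """Widefield approximation from the text: δz ≈ 2 · n · λ / NA²."""
    if not 0.0 < na <= n:
        raise ValueError("NA must be positive and cannot exceed the medium's index n")
    return 2.0 * n * wavelength_nm / na ** 2


if __name__ == "__main__":
    # Illustrative cases at 520 nm: a dry, a water, and an oil objective.
    for label, na, n in (("air 0.95", 0.95, 1.00),
                         ("water 1.2", 1.2, 1.33),
                         ("oil 1.4", 1.4, 1.515)):
        print(f"{label:9s}: δz ≈ {axial_resolution_nm(520.0, na, n):.0f} nm")
```

Doubling NA cuts δz by a factor of four. At a fixed NA, δz grows in proportion to n.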

Coherence and contrast method caveats. The specific constants in the resolution expressions can shift with coherent imaging or confocal detection. Under coherent illumination (e.g., a spatially coherent laser), the lateral cutoff frequency is closer to NA / λ, half the bandwidth of incoherent imaging. Confocal point-scanning can sharpen the PSF laterally and axially compared to widefield, but it remains fundamentally diffraction-limited under linear optics. Regardless of modality, the key lesson persists: capturing higher-angle diffracted light, i.e., increasing NA, pushes the resolution limit finer.

Finally, remember that optical resolution is necessary but not sufficient for visibility. Two features may be within the diffraction limit yet remain indistinct if contrast is poor. That is why illumination and contrast strategies, such as those outlined in Contrast and Illumination, are inseparable from resolution in practical microscopy.

Numerical Aperture Deep Dive: Collection Angle, Immersion Media, and Refractive Index

Numerical aperture encapsulates two factors: geometry and medium. Geometrically, a lens that accepts a wider cone of light from the specimen collects more of the diffracted orders that encode high spatial frequencies. Physically, light refracts at interfaces and propagates differently depending on the medium’s refractive index, n. NA brings both aspects together in a single value: NA = n · sin(θ).

Air, water, glycerol, and oil immersion. Because sin(θ) is bounded between 0 and 1, the maximum achievable NA in a medium cannot exceed its refractive index. In practice:

- Air objectives (n ≈ 1.00) commonly reach NA values of about 0.95 at the high end. They are convenient and versatile, but the low refractive index of air limits their maximum NA.

- Water-immersion objectives (n ≈ 1.33) can attain NA values in the ~1.0–1.2 range while minimizing refractive index mismatch with aqueous specimens. They are useful for live-cell and thick-sample imaging in aqueous media.

- Glycerol-immersion objectives (n ≈ 1.47) provide an index closer to many cleared or high-index mounting media, reducing spherical aberration for such samples.

- Oil-immersion objectives (n ≈ 1.515 for standard immersion oils) routinely achieve the highest NA values in standard light microscopy, often in the 1.3–1.4+ range, enabling the finest diffraction-limited resolution.
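Rearranging NA = n · sin(θ) gives the collection half-angle, θ = arcsin(NA / n). It also shows why NA can never exceed n. The sketch below is a minimal illustration. The example NA and n pairs are the representative values from the list above, not the specification of any particular objective.

```python
import math


def half_angle_deg(na: float, n: float) -> float:
    """Half-angle θ of the collection cone, from NA = n · sin(θ)."""
    if not 0.0 < na <= n:
        raise ValueError("need 0 < NA ≤ n, because sin(θ) cannot exceed 1")
    return math.degrees(math.asin(na / n))


if __name__ == "__main__":
    for label, na, n in (("air", 0.95, 1.00),
                         ("water", 1.2, 1.33),
                         ("glycerol", 1.3, 1.47),
                         ("oil", 1.4, 1.515)):
        print(f"{label:8s} NA {na:.2f} (n = {n}): θ ≈ {half_angle_deg(na, n):.1f}°")
```

High-NA objectives in every medium already collect light at very steep angles. Higher-index immersion raises NA mainly by raising n, not by widening θ much further.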


Changing immersion media does more than alter NA limits. Index matching between the specimen, cover glass, and immersion medium also reduces spherical aberration. When the refractive indices differ substantially, rays traveling through different parts of the pupil experience different path lengths, degrading the point-spread function and softening contrast. This is why some high-NA objectives include a correction collar to compensate for small variations in cover glass thickness and index; see Objective Lens Trade-offs for details.

Condenser NA matters too. In transmitted-light techniques, the condenser’s NA should be similar to the objective’s NA. The illumination then includes oblique angles steep enough to send high-angle diffracted orders into the objective’s aperture. For brightfield under Köhler illumination, matching the condenser aperture to the objective NA typically gives the best balance of resolution and contrast. Stopping down the condenser reduces glare and increases depth of field, but it also discards high-angle illumination, reducing the system’s transfer of high spatial frequencies. As discussed in Contrast and Illumination, methods such as darkfield depart from this matching on purpose.
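A common way to quantify the cost of stopping down the condenser comes from partially coherent brightfield theory. The finest period that can be transmitted is about λ / (NA_obj + NA_cond), for NA_cond ≤ NA_obj. The sketch below assumes that standard approximation, which is not stated in the text above. It shows the two limiting cases: a matched condenser reproduces λ / (2 · NA), and a fully closed condenser approaches the coherent limit λ / NA.

```python
def brightfield_cutoff_period_nm(wavelength_nm: float, na_objective: float,
                                 na_condenser: float) -> float:
    """Finest transmitted period in brightfield, λ / (NA_obj + NA_cond).

    Partially coherent approximation, assumed valid for 0 ≤ NA_cond ≤ NA_obj.
    """
    if not 0.0 <= na_condenser <= na_objective:
        raise ValueError("expects 0 ≤ NA_cond ≤ NA_obj")
    return wavelength_nm / (na_objective + na_condenser)


if __name__ == "__main__":
    # Hypothetical NA 1.4 objective at 550 nm, condenser progressively stopped down.
    for na_c in (1.4, 1.0, 0.7, 0.3, 0.0):
        period = brightfield_cutoff_period_nm(550.0, 1.4, na_c)
        print(f"condenser NA {na_c:.1f}: finest period ≈ {period:.0f} nm")
```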

Depth of field and NA. As NA rises, the depth of field (the axial range over which features remain in acceptable focus) shrinks. This trade-off is an inevitable consequence of tighter focusing of light into a smaller lateral spot. For thin, flat specimens, high NA is desirable; for thick or uneven specimens, you may accept a lower NA to broaden depth of field and reduce out-of-focus blur, or you may use optical sectioning methods if appropriate to your modality.

Practical constraints. High-NA, high-magnification objectives often exhibit shorter working distances and narrower fields of view. They may also be more sensitive to cover glass thickness and specimen proximity. These constraints do not diminish the benefits of NA; rather, they shape the practical envelope of where high-NA imaging excels. Many users maintain a set of objectives spanning NA, magnification, and immersion types to address diverse specimens and tasks.

Magnification vs. Resolving Power: Avoiding Empty Magnification

It is tempting to equate a bigger image with better detail, but magnification only scales what the optics have already formed. Once the image is limited by diffraction and aberration, increasing magnification merely spreads the same information over more pixels or a larger retinal area. The result is empty magnification: a larger, blurrier rendition without new resolvable content.

Useful magnification rule-of-thumb. To keep magnification commensurate with the information content of the optical image, a practical rule is to aim for total visual magnification between roughly 500× and 1000× the objective’s NA. Staying in this range helps ensure that the finest resolvable details are enlarged enough to be appreciated, without needlessly diluting brightness or field of view. For example, an objective with NA 0.65 supports useful total visual magnification of roughly 325× to 650× (500 × 0.65 to 1000 × 0.65). Pushing to far higher total magnification may make the image dim and grainy with no added detail.
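The rule of thumb is simple arithmetic, so it is easy to check an objective and eyepiece pairing. The sketch below hard-codes the 500× and 1000× bounds from the text as defaults. The helper names and eyepiece choices are hypothetical.

```python
from typing import Tuple


def useful_magnification_range(na: float, low: float = 500.0,
                               high: float = 1000.0) -> Tuple[float, float]:
    """Rule-of-thumb useful total visual magnification: ~500 × NA to ~1000 × NA."""
    return low * na, high * na


def is_empty_magnification(total_mag: float, na: float) -> bool:
    """True when total magnification exceeds the upper end of the useful range."""
    return total_mag > useful_magnification_range(na)[1]


if __name__ == "__main__":
    na = 0.65
    lo, hi = useful_magnification_range(na)
    print(f"NA {na}: useful range ≈ {lo:.0f}× to {hi:.0f}×")
    for eyepiece in (10.0, 15.0, 25.0):  # paired with a 40× objective
        total = 40.0 * eyepiece
        print(f"40× objective × {eyepiece:.0f}× eyepiece = {total:.0f}×, "
              f"empty: {is_empty_magnification(total, na)}")
```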

Total magnification vs. effective sampling. With cameras, the limit is not the eyepiece but the pixel grid. The question becomes: are you sampling the optical image at or above the Nyquist rate for the optics’ spatial frequency cutoff? This is discussed in depth in Sampling and Cameras, but the idea mirrors the visual case—sample densely enough to represent the detail the optics can deliver, but not so densely that you only increase file sizes without capturing new content.

Brightness and noise considerations. Higher magnification spreads the same photon flux over a larger area on the sensor or over more of the retina. In a camera, that can result in fewer photons per pixel at a given exposure, reducing signal-to-noise ratio unless exposure time or illumination intensity is increased. Visually, it makes the image dimmer. Because diffraction already limits the smallest spot size, increasing magnification beyond what sampling or the eye can usefully discriminate simply trades away brightness and signal-to-noise.
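The brightness penalty can be estimated with a simple scaling argument. Hold the specimen, collection NA, exposure, and sensor fixed. Each pixel then views an object-space area of (p_sensor / M)², so the photons per pixel scale as 1 / M². The sketch below assumes those conditions and purely shot-noise-limited detection, so that SNR scales as the square root of the photon count. The function names are illustrative.

```python
import math


def relative_photons_per_pixel(mag_a: float, mag_b: float) -> float:
    """Photons per pixel at magnification mag_b relative to mag_a (∝ 1 / M²)."""
    return (mag_a / mag_b) ** 2


def shot_noise_snr_ratio(mag_a: float, mag_b: float) -> float:
    """Relative SNR assuming shot-noise-limited detection (SNR ∝ sqrt(photons))."""
    return math.sqrt(relative_photons_per_pixel(mag_a, mag_b))


if __name__ == "__main__":
    # E.g., swapping a 1× camera adapter for a hypothetical 2× relay at the same NA.
    print(f"photons per pixel: {relative_photons_per_pixel(1.0, 2.0):.2f}×")
    print(f"shot-noise SNR:    {shot_noise_snr_ratio(1.0, 2.0):.2f}×")
```

Doubling the magnification leaves each pixel with a quarter of the photons and halves the shot-noise SNR, unless exposure or illumination is increased to compensate.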

When higher magnification is warranted. There are cases where increased magnification is appropriate even if the nominal resolution has been reached—for example, to make small features easier to annotate, to increase measurement precision on the screen, or to match the spatial sampling to the sensor’s pixel size. But the key is to ensure you are not mistaking a bigger image for a more detailed image. If you haven’t increased NA, shortened wavelength, or reduced aberrations, you haven’t improved resolution.

In short, pair magnification with the resolving power set by NA and wavelength. When in doubt, revisit the relations in How Resolution Arises and match your system to those limits.

Contrast and Illumination: Köhler, Brightfield, Darkfield, Phase, and DIC Basics

Resolution describes the finest separable detail, but contrast determines whether that detail is actually visible. Illumination geometry and contrast methods redistribute light and phase information so that small differences in specimen structure emerge in the image. Here we summarize how major approaches influence the image, without prescribing laboratory procedures.

Köhler illumination. Köhler illumination is a way of aligning the microscope so that the specimen field is illuminated with spatially uniform intensity and a controllable angular distribution. The condenser aperture diaphragm sets the angular range of illumination, which affects resolution and depth of field. The field diaphragm controls the size of the illuminated area. Under Köhler, the condenser aperture can be adjusted to match the objective’s NA to maximize the transfer of high spatial frequencies, as discussed in Numerical Aperture Deep Dive.


Brightfield. In brightfield, contrast arises primarily from absorption, scattering, and refractive index variations that alter amplitude and phase. Transparent specimens with little absorption may show weak contrast, even if the optics could resolve fine details. Closing the condenser aperture can boost contrast but reduces high-frequency transfer, trading off resolution for visibility.

Darkfield. Darkfield blocks the central (undeviated) beam and illuminates the specimen with oblique rays. Only light scattered or diffracted by the specimen enters the objective, rendering the background dark and small scatterers bright. The method effectively emphasizes high spatial frequencies but can be sensitive to dust and imperfections. The condenser NA in darkfield must exceed the objective NA so that direct light is excluded while scattered light is captured.

Phase contrast. Many biological specimens are nearly transparent in amplitude but introduce spatially varying optical path length, i.e., phase shifts. Phase contrast converts these phase variations into intensity differences. It does so by shifting the phase of, and attenuating, the undeviated (background) light relative to the diffracted light, using a condenser annulus and a matching phase ring in the objective. The technique reveals fine detail without staining. It also avoids the need to stop down the condenser excessively, so it maintains better resolution than overly constricted brightfield.

Differential interference contrast (DIC). DIC uses polarized light and beam-shearing prisms to form two closely spaced, laterally displaced images, which a second prism and an analyzer recombine so that they interfere. Optical path length differences across the shear (that is, path length gradients) appear as intensity variations with a pseudo-3D relief effect. DIC is highly sensitive to subtle gradients and, like phase contrast, can preserve high-resolution information when correctly configured. Note that sample birefringence and plastic components in the optical path can affect DIC performance.

These contrast methods do not circumvent diffraction limits, but they can make details at or near the limit more visible. Selecting the right method depends on specimen properties and the information you need. If the theoretical limit suggests your detail is marginally resolvable with the available NA, a phase-based contrast method may be the difference between seeing it and missing it.

Sampling and Cameras: Pixel Size, Nyquist, and Digital Resolution

In digital microscopy, the camera’s pixel grid samples the continuous image formed at the intermediate image plane. To avoid losing information, the sampling must be fine enough to capture the highest spatial frequencies that the optics can pass. This is the domain of the Nyquist–Shannon sampling theorem applied to spatial imaging.

Effective pixel size in object space. A camera pixel of physical size p_sensor (e.g., 3.45 µm) corresponds to a projected size on the specimen plane of p_object = p_sensor / M_eff, where M_eff is the effective magnification from the specimen to the sensor. In an infinity-corrected system, M_eff is the objective’s nominal magnification multiplied by the magnification of any additional relay optics in the camera port. The nominal magnification is set by the ratio of tube lens focal length to objective focal length. Whatever the details, the ratio p_sensor / M_eff captures how finely the specimen is sampled.
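The chain from focal lengths to object-space pixel size is short enough to script. The sketch below assumes an infinity-corrected layout. The objective focal length, tube lens focal length, relay factor, and pixel pitch are hypothetical example values, not the specification of any particular microscope.

```python
def effective_magnification(f_tube_mm: float, f_objective_mm: float,
                            relay_mag: float = 1.0) -> float:
    """M_eff = (f_tube / f_objective) × relay magnification (infinity-corrected)."""
    return (f_tube_mm / f_objective_mm) * relay_mag


def object_pixel_size_um(p_sensor_um: float, m_eff: float) -> float:
    """Projected pixel size on the specimen, p_object = p_sensor / M_eff."""
    return p_sensor_um / m_eff


if __name__ == "__main__":
    # Hypothetical: 10 mm objective focal length, 200 mm tube lens, optional relay.
    for relay in (1.0, 0.5):
        m_eff = effective_magnification(200.0, 10.0, relay)
        p_obj = object_pixel_size_um(3.45, m_eff)
        print(f"relay {relay}×: M_eff = {m_eff:.1f}×, p_object = {p_obj * 1000:.1f} nm")
```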

Nyquist criterion for microscope imaging. For incoherent imaging, the highest spatial frequency in object space that the optical system can transmit is approximately f_c ≈ 2 · NA / λ. To sample without aliasing, the pixel sampling frequency in object space must be at least twice this value. The corresponding Nyquist-limited pixel size on the specimen is therefore:

p_object ≤ λ / (4 · NA)

As a sanity check, compare this with Rayleigh’s lateral resolution estimate, d ≈ 0.61 · λ / NA. The Nyquist pixel size λ / (4 · NA) is about d / 2.44, so Nyquist sampling places roughly 2.4 pixels across the Rayleigh resolution element. Sampling slightly finer, around d / 3, gives about 3 pixels. Both fall within the common practical guidance of 2–3 pixels per resolution element in digital microscopy.

Example. Suppose your objective has NA = 0.65 and you image green light around λ = 550 nm. The Nyquist-limited pixel size on the specimen is p_object ≤ 550 nm / (4 · 0.65) ≈ 212 nm. If your camera has 3.45 µm pixels and your effective magnification is 20× at the sensor, then p_object = 3.45 µm / 20 = 0.1725 µm (172.5 nm), which comfortably meets Nyquist. Reducing effective magnification to 10× would give p_object ≈ 345 nm, which undersamples the optics for this wavelength and NA; you would begin to lose the highest spatial frequencies.
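The worked example can be reproduced and reused for other configurations. The sketch below applies only p_object = p_sensor / M_eff and the Nyquist bound λ / (4 · NA) from above. The helper names are illustrative.

```python
from typing import Tuple


def nyquist_pixel_nm(wavelength_nm: float, na: float) -> float:
    """Largest object-space pixel that satisfies Nyquist: λ / (4 · NA)."""
    return wavelength_nm / (4.0 * na)


def sampling_report(p_sensor_um: float, m_eff: float, wavelength_nm: float,
                    na: float) -> Tuple[float, float, bool]:
    """Return (p_object in nm, Nyquist limit in nm, whether Nyquist is met)."""
    p_object_nm = 1000.0 * p_sensor_um / m_eff
    limit_nm = nyquist_pixel_nm(wavelength_nm, na)
    return p_object_nm, limit_nm, p_object_nm <= limit_nm


if __name__ == "__main__":
    for m_eff in (20.0, 10.0):  # the two cases from the example above
        p, limit, ok = sampling_report(3.45, m_eff, 550.0, 0.65)
        print(f"M_eff {m_eff:.0f}×: p_object {p:.1f} nm vs limit {limit:.1f} nm, "
              f"meets Nyquist: {ok}")
```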

Color, binning, and demosaicing. Color cameras with Bayer filters sample each color channel on a subset of pixels. The effective sampling for each wavelength band is therefore different than for a monochrome sensor with the same pixel pitch. Demosaicing reconstructs a full-color image but does not create detail beyond what the CFA sampling supports. Hardware binning (summing adjacent pixels) increases signal at the cost of spatial sampling and should be used with awareness of how it moves you relative to the Nyquist condition.
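Binning and Bayer sampling both coarsen the object-space sampling pitch. The sketch below makes two simplifying assumptions. First, b × b hardware binning multiplies the pitch by b. Second, on a standard RGGB Bayer sensor, the red and blue sites repeat every 2 pixels along rows and columns before demosaicing. The green channel's checkerboard layout is not modeled. The numbers reuse the example above, with 650 nm standing in for the red band.

```python
def sampled_pitch_nm(p_sensor_um: float, m_eff: float, binning: int = 1,
                     bayer_red_or_blue: bool = False) -> float:
    """Object-space sampling pitch along one axis, in nm.

    binning: b for b×b hardware binning (pitch grows b-fold).
    bayer_red_or_blue: True for the red or blue channel of an RGGB sensor,
    whose sites repeat every 2 pixels (assumed layout, before demosaicing).
    """
    pitch_nm = 1000.0 * p_sensor_um * binning / m_eff
    return 2.0 * pitch_nm if bayer_red_or_blue else pitch_nm


def meets_nyquist(pitch_nm: float, wavelength_nm: float, na: float) -> bool:
    """Compare a sampling pitch against the Nyquist bound λ / (4 · NA)."""
    return pitch_nm <= wavelength_nm / (4.0 * na)


if __name__ == "__main__":
    cases = {
        "mono, no binning (550 nm)": (sampled_pitch_nm(3.45, 20.0), 550.0),
        "mono, 2x2 binning (550 nm)": (sampled_pitch_nm(3.45, 20.0, binning=2), 550.0),
        "Bayer red channel (650 nm)": (sampled_pitch_nm(3.45, 20.0, bayer_red_or_blue=True), 650.0),
    }
    for label, (pitch, wl) in cases.items():
        print(f"{label}: {pitch:.1f} nm, meets Nyquist: {meets_nyquist(pitch, wl, 0.65)}")
```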

Optics first, pixels second. If your system is optically limited, by NA, aberrations, or wavelength, then a higher-resolution sensor cannot recover detail the optics never delivered. It can still be useful for larger fields of view or post-processing, but it will not improve optical resolution. Conversely, if your optics can pass more detail than your sampling captures, moving to smaller pixels or increasing effective magnification at the sensor may yield a direct improvement. This underscores the fundamental balance outlined in Magnification vs. Resolving Power.

Field of view considerations. The camera’s active area, combined with the microscope’s field number and relay optics, sets the imaged field of view. Increasing magnification to meet Nyquist reduces the field; decreasing magnification increases the field but risks under-sampling. Many systems exploit camera adapters with intermediate magnifications (e.g., 0.5×, 1×, 1.5×) to tune this balance to the sensor size and the objectives in use.
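The adapter trade-off can be tabulated by computing object-space pixel size and field of view side by side. The sketch below uses a hypothetical 2048 × 2048 sensor with 6.5 µm pixels behind a 40×/0.95 objective at 520 nm. It does not model vignetting: with a 0.5× adapter, the projected sensor may exceed the field the optics actually support.

```python
from typing import Tuple


def camera_sampling(sensor_px: Tuple[int, int], p_sensor_um: float,
                    m_objective: float, adapter_mag: float):
    """Return (object-space pixel size in nm, field of view (w, h) in µm)."""
    m_eff = m_objective * adapter_mag
    pixel_nm = 1000.0 * p_sensor_um / m_eff
    fov_um = (sensor_px[0] * p_sensor_um / m_eff, sensor_px[1] * p_sensor_um / m_eff)
    return pixel_nm, fov_um


if __name__ == "__main__":
    nyquist_nm = 520.0 / (4.0 * 0.95)  # λ / (4 · NA)
    for adapter in (0.5, 1.0, 1.5):
        px, (w, h) = camera_sampling((2048, 2048), 6.5, 40.0, adapter)
        print(f"{adapter}× adapter: {px:.1f} nm/pixel "
              f"(Nyquist ≤ {nyquist_nm:.1f} nm), FOV {w:.0f} × {h:.0f} µm")
```

In this hypothetical setup, only the 1.5× adapter reaches Nyquist, and it covers the smallest field of view.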

Objective Lens Trade-offs: Working Distance, Field Number, Aberrations, and Cover Glass

Objectives embody complex design choices that trade field flatness, chromatic correction, working distance, and cost against NA and magnification. Understanding these trade-offs helps you pick the right optic for the task and avoid unintended compromises in resolution or contrast.

Working distance (WD) vs. NA. As NA increases, objectives usually require shorter working distances to admit higher-angle rays. Long working distance objectives are engineered to provide extra clearance for specimens or equipment, but they often do so with lower NA at a given magnification. When imaging at the diffraction limit is essential, prioritize NA; when clearance and maneuverability matter, a long WD design may be the better fit.

Field number and field of view. The field number (FN) of the eyepiece indicates the diameter of the intermediate image that can be observed without vignetting, expressed in millimeters. For visual observation, the diameter of the field of view on the specimen is approximately FOV ≈ FN / M_obj, where M_obj is the objective magnification. For camera-based viewing, the field is governed by sensor size and the relay magnification, but the concept is analogous: higher magnification reduces the field for a given image circle.
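For visual observation, FOV ≈ FN / M_obj is a one-line calculation. The sketch below adds an optional intermediate magnification factor, defaulting to 1. This covers stands with a magnification changer; that factor is an assumption beyond the text. The field number and objectives in the example are illustrative.

```python
def visual_fov_mm(field_number_mm: float, m_objective: float,
                  m_intermediate: float = 1.0) -> float:
    """Approximate specimen field diameter, FN / (M_obj × M_intermediate)."""
    return field_number_mm / (m_objective * m_intermediate)


if __name__ == "__main__":
    fn = 22.0  # hypothetical eyepiece field number, in mm
    for m_obj in (10.0, 40.0, 100.0):
        print(f"{m_obj:.0f}× objective: FOV ≈ {visual_fov_mm(fn, m_obj):.2f} mm")
```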

Planarity and aberration correction. Objectives are labeled to indicate the balance of corrections they provide:

- Achromat objectives correct chromatic aberration at two wavelengths and are common in education and routine imaging. They may show field curvature and residual color fringing at high contrast edges.

- Plan-achromat objectives add field-flattening correction so that the image remains in focus across a larger portion of the field.

- Fluorite (semi-apochromat) objectives typically offer higher NA and better color correction than achromats, making them suitable for fluorescence and demanding brightfield imaging.

- Apochromat objectives provide advanced chromatic and spherical aberration correction across multiple wavelengths with flat fields, often at the highest NA values available for a given magnification. They are preferred for critical imaging where color fidelity, contrast, and resolution are paramount.

No label guarantees perfection; manufacturing tolerances, coverslip variations, and the rest of the optical train still matter. Yet, understanding these categories clarifies why two objectives of the same nominal magnification and NA can yield noticeably different images.

Cover glass thickness and correction collars. Many high-NA transmitted-light objectives are designed for a standard cover glass thickness, commonly around 0.17 mm (often denoted as #1.5). Departures from the design thickness introduce spherical aberration, particularly at high NA, which broadens the PSF and reduces contrast. Objectives with a correction collar allow you to compensate within a specified range of thicknesses by mechanically adjusting internal lens spacing. For aqueous imaging with water-immersion objectives, matching the refractive index and intended cover glass thickness can materially improve performance.


Parfocal distance and mechanical compatibility. Objectives are built to industry-standard mechanical interfaces and parfocal distances in many systems, facilitating interchangeability. However, using objectives outside their intended tube lens focal lengths (in infinity systems) or with non-matching relay optics can change their effective magnification and field correction. Consult your microscope’s optical design to maintain the intended performance envelope when mixing components, and refer back to Sampling and Cameras to reassess pixel scaling if effective magnification changes.
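When an infinity-corrected objective is used with a tube lens other than the one it was designed for, its magnification changes. The objective focal length is fixed at f_design / M_nominal, so M_actual = f_actual / f_objective. The sketch below assumes a hypothetical 20× objective designed for a 200 mm tube lens and mounted behind a 180 mm tube lens. It propagates the change into the object-space pixel size for 3.45 µm camera pixels. Field correction effects are not modeled.

```python
def magnification_with_tube_lens(m_nominal: float, f_tube_design_mm: float,
                                 f_tube_actual_mm: float) -> float:
    """Actual magnification when the tube lens differs from the design value."""
    f_objective_mm = f_tube_design_mm / m_nominal
    return f_tube_actual_mm / f_objective_mm


if __name__ == "__main__":
    m_actual = magnification_with_tube_lens(20.0, 200.0, 180.0)
    print(f"actual magnification: {m_actual:.1f}×")
    print(f"pixel size: {3450.0 / 20.0:.1f} nm (nominal) -> "
          f"{3450.0 / m_actual:.1f} nm (actual)")
```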

Calibrating Magnification and Measuring Features Correctly

Interpreting images often involves measuring feature sizes or distances on the specimen. To avoid systematic errors, calibrate the relationship between image scale (pixels or reticle marks) and object distance under the specific optical configuration in use.

Stage micrometer for visual and camera calibration. A stage micrometer provides a precisely ruled scale on a slide, frequently spanning a millimeter with subdivisions (e.g., 10 µm increments). By imaging the micrometer under a given objective, immersion medium, and adapter, you can determine how many pixels correspond to a known distance. The calibration factor then applies to images captured under the same configuration.
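With several ruled lines in view, a least-squares fit is less sensitive to error in any single line position than a two-point measurement. The sketch below makes two assumptions. First, you have already measured the pixel centers of consecutive, equally spaced micrometer lines, for example from an intensity profile. Second, that spacing is known, such as 10 µm. It fits micrometers against pixels and returns the slope in µm per pixel. The line positions in the example are made up for illustration.

```python
from typing import Sequence


def calibrate_um_per_pixel(line_positions_px: Sequence[float], spacing_um: float) -> float:
    """Least-squares slope of known line positions (k · spacing) vs. measured
    pixel centers of consecutive ruled lines; returns micrometers per pixel."""
    n = len(line_positions_px)
    if n < 2:
        raise ValueError("need at least two ruled lines")
    xs = list(line_positions_px)
    ys = [k * spacing_um for k in range(n)]
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    return sxy / sxx


if __name__ == "__main__":
    # Hypothetical measured centers of 10 µm micrometer divisions.
    positions = [12.0, 70.0, 128.1, 185.9, 244.0, 302.1]
    scale = calibrate_um_per_pixel(positions, 10.0)
    print(f"{scale:.4f} µm/pixel ({1000 * scale:.1f} nm/pixel)")
```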

Ocular reticle calibration. For visual measurements, an eyepiece fitted with a reticle must be calibrated for each objective. Superimpose the reticle on the stage micrometer and note how many reticle divisions match a known micrometer distance. The ratio yields a conversion from reticle units to micrometers for that objective. Because the reticle resides in the eyepiece and the objective magnification changes with objective switching, the calibration is objective-specific.
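Because the reticle calibration is objective-specific, it is natural to store one factor per objective. The sketch below uses hypothetical alignments between reticle divisions and micrometer distances. The dictionary keys and numbers are illustrative, not reference values.

```python
def um_per_reticle_division(micrometer_distance_um: float, reticle_divisions: float) -> float:
    """Conversion factor: known micrometer distance / matching reticle divisions."""
    return micrometer_distance_um / reticle_divisions


# Hypothetical calibrations: (micrometer distance in µm, reticle divisions spanned).
ALIGNMENTS = {"10x": (1000.0, 100.0), "40x": (250.0, 100.0), "100x": (100.0, 100.0)}
CALIBRATION = {obj: um_per_reticle_division(*pair) for obj, pair in ALIGNMENTS.items()}

if __name__ == "__main__":
    reading = 37  # reticle divisions spanned by a feature, viewed with the 40x objective
    print(f"feature length ≈ {reading * CALIBRATION['40x']:.1f} µm")
```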

Pixel calibration and metadata. For digital images, the calibration process yields a pixel size in object space (e.g., 172.5 nm/pixel as in the example in Sampling and Cameras). Many acquisition software packages store this calibration in image metadata. Be mindful that changing any element that affects effective magnification—objective, tube lens, camera relay, or camera binning—invalidates the prior calibration until re-measured.

Perspective and tilt errors. Measurements assume the specimen plane is orthogonal to the optical axis and that the feature lies in the plane of focus. Tilted specimens produce foreshortened distances in the image. For thick specimens, ensure you are measuring in the focal plane of the feature of interest or use appropriate 3D imaging methods if axial distances are required. While these are practical concerns, they reflect fundamental projection geometry rather than procedural nuance.
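The size of the foreshortening error follows from projection geometry. A feature lying along the direction of steepest tilt appears shortened by cos(tilt). Features parallel to the tilt axis are unaffected. The sketch below assumes the tilt angle is known, for example from focus positions at two points. It corrects only features lying along the tilt direction.

```python
import math


def true_length_um(projected_um: float, tilt_deg: float) -> float:
    """Undo foreshortening for a feature lying along the direction of tilt."""
    return projected_um / math.cos(math.radians(tilt_deg))


if __name__ == "__main__":
    for tilt in (2.0, 5.0, 10.0, 20.0):
        error_pct = 100.0 * (true_length_um(1.0, tilt) - 1.0)
        print(f"tilt {tilt:4.1f}°: projected length is short by ≈ {error_pct:.2f}%")
```

Small tilts cause errors well under one percent, but the error grows quickly at larger angles.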

Reporting measurements. When documenting measurements from micrographs, include the relevant imaging parameters: objective magnification and NA, immersion medium, wavelength band (if known), pixel size in object space, and any image processing that could alter distances (e.g., resampling). This contextualizes your reported values and enables reproducibility.

Common Microscopy Misconceptions and How to Fix Them

Even experienced users can internalize rules of thumb that are broadly true but dangerously incomplete. Here are recurring misconceptions and clarifications linked to the relevant sections above.

- “Higher magnification always means higher resolution.” False. Resolution is limited by NA and wavelength. Increasing magnification alone cannot recover detail beyond the optical cutoff. See Magnification vs. Resolving Power.

- “All NA values are comparable regardless of medium.” Incomplete. NA depends on n; an NA of 1.2 in water immersion and 1.2 in oil immersion involve different refractive indices, and performance with respect to specimen refractive index and spherical aberration can differ. Review Numerical Aperture Deep Dive.

- “Closing the condenser increases resolution.” Partly true but mostly false. Stopping down increases depth of field and contrast but reduces the range of illumination angles, discarding high spatial frequencies and thus reducing the maximum achievable resolution in brightfield. See Contrast and Illumination.

- “A 4K camera gives four times the resolution of a 1K camera.” Not if the optics are the limit. If the optics or sampling at the specimen plane already cap spatial detail, adding more pixels only increases image size. Check Sampling and Cameras.

- “Shorter exposure improves resolution.” Exposure time affects motion blur and noise but not the diffraction limit. Resolution depends on NA, wavelength, and aberrations. Exposure affects image quality, not the diffraction-limited point-spread function.

- “Cover glass thickness doesn’t matter for high-NA objectives.” It does. Deviations from the design thickness introduce spherical aberration that degrades the PSF and reduces contrast. Correction collars can help; see Objective Lens Trade-offs.

- “Phase contrast and DIC increase resolution.” They primarily increase contrast for phase objects, enhancing visibility near the resolution limit, but they do not surpass the diffraction limit set by NA and wavelength. Refer to Contrast and Illumination.

Frequently Asked Questions

How do I choose between water and oil immersion for high-NA imaging?

Choose the immersion medium that matches your specimen’s optical environment and the objective’s design. Oil immersion offers the highest NA values in standard light microscopy and thus the finest diffraction-limited resolution on thin, cover-slipped specimens matched to the objective’s design thickness. Water immersion better matches the refractive index of live, aqueous specimens and can reduce spherical aberration when imaging into aqueous media or slightly deeper into samples. In either case, ensure the cover glass thickness and immersion medium are those intended by the objective, and verify performance by assessing contrast and sharpness in a known test specimen. For background on how immersion affects NA directly, see Numerical Aperture Deep Dive.

What pixel size should I target for my camera to avoid under-sampling?

Estimate the Nyquist-limited pixel size in object space using p_object ≤ λ / (4 · NA), with λ taken as a representative wavelength of your imaging band. Then choose effective magnification and sensor pixel size so that p_object meets this criterion. For example, with NA = 1.0 and λ = 550 nm, p_object should be ≤ 137.5 nm. You can meet this either by using smaller physical pixels or by increasing effective magnification at the sensor. If you must compromise, it is generally better to be slightly oversampled than undersampled, provided that field of view and signal-to-noise remain acceptable. More detail and a worked example appear in Sampling and Cameras.
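The same inequality can be turned around to give the minimum effective magnification for a given camera: M_eff ≥ p_sensor / (λ / (4 · NA)). The sketch below applies only that rearrangement. The pixel pitches in the example are hypothetical.

```python
def min_effective_magnification(p_sensor_um: float, wavelength_nm: float, na: float) -> float:
    """Smallest M_eff such that p_sensor / M_eff ≤ λ / (4 · NA)."""
    p_nyquist_um = wavelength_nm / (4.0 * na) / 1000.0
    return p_sensor_um / p_nyquist_um


if __name__ == "__main__":
    # The FAQ case: NA 1.0 at 550 nm, so p_object must be ≤ 137.5 nm.
    for pitch in (3.45, 6.5):
        m_min = min_effective_magnification(pitch, 550.0, 1.0)
        print(f"{pitch} µm pixels: need M_eff ≥ {m_min:.1f}×")
```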

Final Thoughts on Choosing the Right Optical Parameters for Microscopy

Successful microscopy is a balancing act among numerical aperture, wavelength, magnification, illumination, and sampling. NA and wavelength set the fundamental resolution limits; illumination and contrast determine whether that detail is visible; magnification and pixel size scale the image so the available information is neither squandered nor oversold. If you keep these relationships front and center—NA = n · sin(θ), d ≈ 0.61 · λ / NA, p_object ≤ λ / (4 · NA)—you will configure systems that make the most of your optics without succumbing to empty magnification or needless undersampling.

As you evaluate objectives and camera configurations, refer back to the conceptual anchors in diffraction-limited resolution and Nyquist sampling. A small number of physically grounded checks can save hours of trial and error: match condenser and objective NA under Köhler when appropriate, respect cover glass and immersion specifications, and calibrate your pixel size whenever you change the optical path. With these habits, you can approach new specimens with confidence that what you see is as close to the truth as your optics allow.
